Immersive Visualization / IQ-Station Wiki

This site hosts information on virtual reality systems that are geared toward scientific visualization, and as such often toward VR on Linux-based systems. Thus, pages here cover various software (and sometimes hardware) technologies that enable virtual reality operation on Linux.

The original IQ-station effort was to create low-cost (for the time) VR systems making use of 3DTV displays to produce CAVE/Fishtank-style VR displays. That effort pre-dated the rise of consumer HMD VR systems. However, the realm of midrange-cost large-fishtank systems is still important, and has transitioned from 3DTV-based systems to short-throw projectors.

Difference between revisions of "VRVolVis"

m (Add ANARI Hackathon to 10/10/24 update) |

m (Updates for 11/07/24) |

||

| (2 intermediate revisions by the same user not shown) | |||

| Line 9: | Line 9: | ||

==Recent Results== | ==Recent Results== | ||

(Placed at the top for quick review) | (Placed at the top for quick review) | ||

===Upcoming Conferences=== | |||

* Preparing for two upcoming conferences | |||

** '''CAAV''' — November 11-14: Presenting on ParaView | |||

** '''SC'24''' — November 16-22: Bringing IQ-station to demo Immersive ParaView | |||

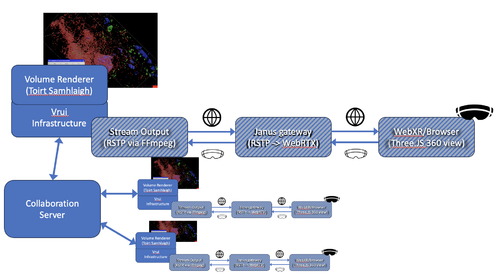

===Streaming Volume Rendering to WebXR=== | |||

* Developing a prototype of a streaming platform from ''Toirt Samhlaigh'' volume visualizer to WebXR | |||

** '''Challenges (11/07/24):''' | |||

*** Janus configuration and web interface has proven to be more difficult than anticipated | |||

**** SRTP was failing to connect stream to Janus server — switched to RTP stream | |||

**** Managed to get an audio stream to work | |||

**** Have not yet been able to get video stream to work | |||

*** Reconsidering whether this is the appropriate technology | |||

**: | |||

** Use the existing and working ''Toirt Samhlaigh'' immersive volume rendering tool | |||

*** Built on the Vrui VR infrastructure | |||

**** Existing collaboration feature | |||

**** Prototyping export to RSTP stream using FFmpeg API | |||

**** Will need to read head tracking data back from RSTP server | |||

**** '''DONE:''' Prototype code that taps into the HMD rendering prior to warping | |||

** TESTING: use ''Janus'' RSTP to WebRTX gateway server | |||

*** ''DONE:'' Built and configuring | |||

*** ''IN-PROGRESS:'' Create a WebRTC app that reads the stream | |||

** ''WebXR'' browser interface to user | |||

*** PROTOTYPING: Three.JS program | |||

**** Read/Render 360 image stream from WebRTC | |||

**** Provide head-tracking back to ''Toirt Samhlaigh'' via ''Janus'' | |||

[[File:Mayo_Toirt_Diagram.png|500px]] | |||

: NOTE: the stripped boxes are all "in-progress" (prototyping) stage | |||

===Some recent development highlights:=== | ===Some recent development highlights:=== | ||

* PARTIAL: '''Reverse Engineering the Volume Rendering algorithm in Toirt Samhlaigh''' | |||

** I've been delving into the Toirt Samhlaigh code that does the volume rendering | |||

** Adding comments as I understand it more | |||

** Have extracted the primary vertex and fragment shaders | |||

** Now building a small test program that distills the volume rendering | |||

** '''TODO:''' Learn how to transfer the volume rendering techniques to a new ANARI backend | |||

* '''Preparing for ANARI Hackathon (next week: October 21-23) | |||

** Revamping some ANARI code in my test code | |||

** Rebuilding all the ANARI code that has a new release in preparation for the Hackathon | |||

* '''Next Week:''' ANARI Hackathon (will consume most of my time) | |||

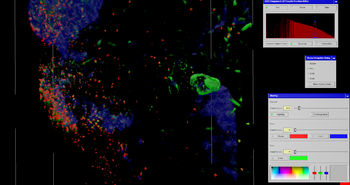

[[File:Screenshot_1.png|500px]] | [[File:Screenshot_1.png|500px]] | ||

* '''Exploring better volume rendering on WebXR/WebGL''' | * '''Exploring better volume rendering on WebXR/WebGL''' | ||

Latest revision as of 14:23, 7 November 2024

VR VolViz

This page describes various file formats, file conversion techniques and software that can be used to manipulate and render 3D volumes of data using volume-rendering techniques. Much of what is described here will easily work with single-scalar volumetric data, but challenges arise when there is a need for

- near-terabyte sized data,

- R, G & B volumetric (vector) data,

- rendering in real time for virtual reality (VR).

Recent Results

(Placed at the top for quick review)

Upcoming Conferences

- Preparing for two upcoming conferences

- CAAV — November 11-14: Presenting on ParaView

- SC'24 — November 16-22: Bringing IQ-station to demo Immersive ParaView

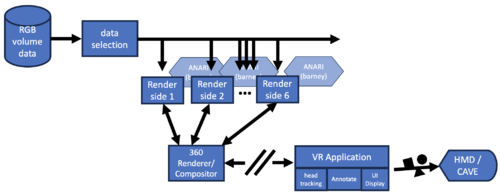

Streaming Volume Rendering to WebXR

- Developing a prototype of a streaming platform from Toirt Samhlaigh volume visualizer to WebXR

- Challenges (11/07/24):

- Janus configuration and web interface have proven to be more difficult than anticipated

- SRTP was failing to connect stream to Janus server — switched to RTP stream

- Managed to get an audio stream to work

- Have not yet been able to get video stream to work

- Reconsidering whether this is the appropriate technology


- Use the existing and working Toirt Samhlaigh immersive volume rendering tool

- Built on the Vrui VR infrastructure

- Existing collaboration feature

- Prototyping export to RTSP stream using FFmpeg API

- Will need to read head tracking data back from RTSP server

- DONE: Prototype code that taps into the HMD rendering prior to warping


- TESTING: use Janus as an RTSP-to-WebRTC gateway server

- DONE: Built and configuring

- IN-PROGRESS: Create a WebRTC app that reads the stream

- WebXR browser interface to user

- PROTOTYPING: Three.JS program

- Read/Render 360 image stream from WebRTC

- Provide head-tracking back to Toirt Samhlaigh via Janus


- NOTE: in the diagram (Mayo_Toirt_Diagram.png), the striped boxes are all at the "in-progress" (prototyping) stage
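The "Read/Render 360 image stream" step comes down to looking up the part of each 360 frame that matches the viewer's head direction. The sketch below shows that lookup in Python, assuming the frames are equirectangular (the notes above do not state the projection) and assuming Three.js-style axes. The function name is hypothetical, and the real client would be a Three.js program rather than Python.

```python
import math


def direction_to_equirect(x, y, z):
    """Map a view direction to (u, v) in an equirectangular frame.

    Assumed conventions (not taken from the project code): -Z is forward
    and +Y is up, as in Three.js; u runs left to right in [0, 1] and
    v runs top to bottom in [0, 1].
    """
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    longitude = math.atan2(x, -z)      # 0 when looking straight ahead
    latitude = math.asin(y / length)   # +pi/2 when looking straight up
    u = 0.5 + longitude / (2.0 * math.pi)
    v = 0.5 - latitude / math.pi
    return u, v


print(direction_to_equirect(0.0, 0.0, -1.0))  # (0.5, 0.5): centre of the frame
print(direction_to_equirect(1.0, 0.0, 0.0))   # (0.75, 0.5): 90 degrees to the right
```

Multiplying u and v by the frame width and height gives the pixel to sample. The head direction used here is the same quantity that would be sent back to the renderer as head-tracking data.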

Some recent development highlights:

- PARTIAL: Reverse Engineering the Volume Rendering algorithm in Toirt Samhlaigh

- I've been delving into the Toirt Samhlaigh code that does the volume rendering

- Adding comments as I understand it more

- Have extracted the primary vertex and fragment shaders

- Now building a small test program that distills the volume rendering

- TODO: Learn how to transfer the volume rendering techniques to a new ANARI backend

- Preparing for ANARI Hackathon (next week: October 21-23)

- Revamping some ANARI code in my test code

- Rebuilding all the ANARI code that has a new release in preparation for the Hackathon

- Next Week: ANARI Hackathon (will consume most of my time)

- Exploring better volume rendering on WebXR/WebGL

- Exploring some new volume rendering code, but not fully functional yet

- Exploring A-Frame networking

- Networked A-Frame on GitHub

- Issues running with current version of npm

- Updated ILLIXR ANARI interface

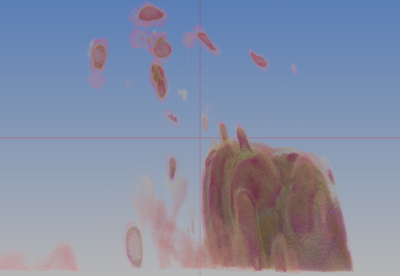

- includes writing a new example for the volume rendering (see image above)

- Reviewing volume rendering techniques from Toirt Samhlaigh

- The volume rendering implementation of Toirt Samhlaigh is consistently better than other volume rendering backends, so I've begun looking into either:

- Adding a streaming capability to Toirt Samhlaigh

- Learning how to transfer the volume rendering techniques to a new ANARI backend


Planned near-term work

- Prep for ANARI Hackathon (October 21-23)

- ANARI Hackathon Registration Form

- Explore new volume rendering

- Enhance ParaView interface

- Continue testing of A-Frame networking API

- Explore Trame combined with WebXR

- DONE: Create an ILLIXR volume rendering plugin

- Test on NCSA Illinois-Computes system

- Investigate AWS as possible web-host

Previous development highlights

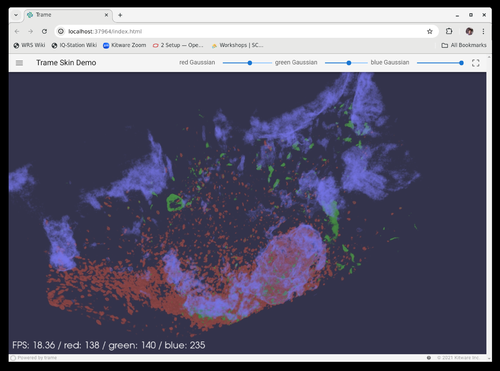

- Exploration of Trame

- open-source Python-based visualization web-server Kitware Trame

- works with VTK

- ported volume rendering example to Trame

- compared ANARI with VTK volume rendering

- ANARI is surprisingly slower

- VTK does a reasonable job of multi-channel rendering

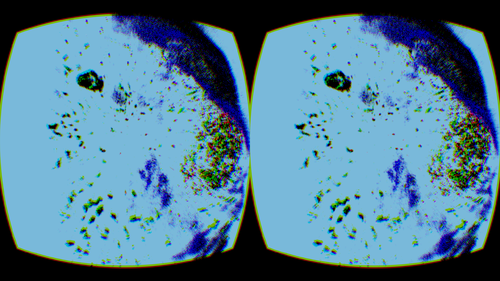

- Preliminary 360 sphere rendering experiments with WebXR

- testing from recorded 360

- need to develop a streaming methodology

- Updated ANARI port to ILLIXR

- next: port the volume visualization to an ILLIXR plugin

- Lots of work building existing tools on newly installed OS

- Time-consuming hassle to deal with incompatible versions of CUDA, etc.

- Successful build of new ILLIXR code

- Next step: recreate the ANARI plugin for ILLIXR

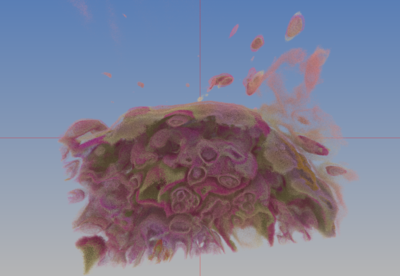

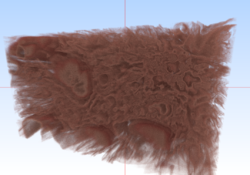

- Testing new Visionaray multi-volume rendering

- Performance has been improved by the Visionaray author

- Learned of a new OpenXR-remote rendering tool on the horizon

- Electric-Maple

- Exploring transfer functions that better match Steven's expectations

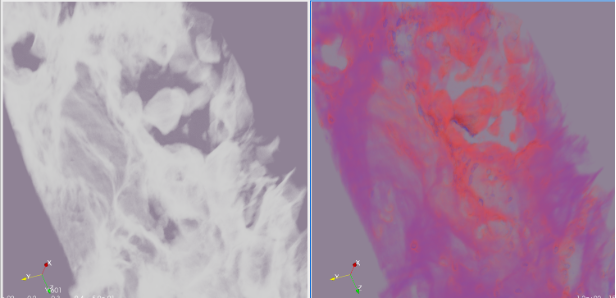

- Toirt Samhlaigh matches pretty well (see side-by-side above)

- Barney can render the channels separately

- (currently looking at operations needed to merge)

- Not yet working


- An OpenXR application that can render ANARI renderings in the HMD has been written

- Remote rendering of OpenXR applications to a Quest has been demonstrated

- Using WiVRn

- Tested with ANARI rendering, and it works!

- (Still need a CUDA renderer on host with WiVRn server for reasonable rendering rate)

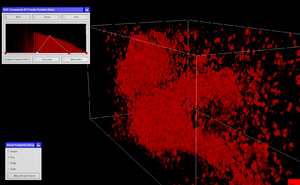

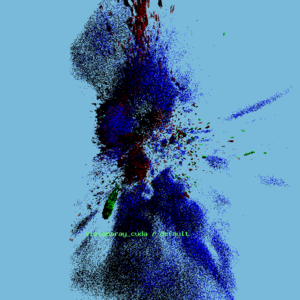

- At least one (perhaps two) ANARI backends now properly handle rendering of multiple overlapping volumes

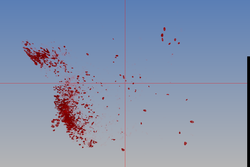

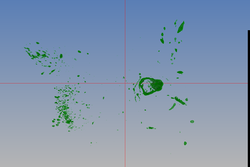

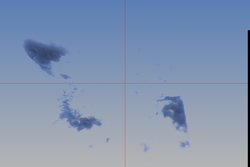

- The Visionaray (visionaray_cuda) ANARI backend has been tested — images below

- The Barney ANARI backend has reportedly been fixed, but has not (yet) been confirmed — is next on the list of tests

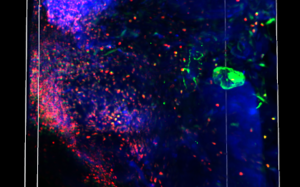

- The red, green, and blue test volumes have been replaced by actual 3D microscope data (skin) provided by the Mayo Clinic. This is a new dataset, but generally similar to the original (a bit larger).

Remote rendering

- ANARI's "remote" rendering backend feature has been tested, and presently works in some circumstances, but not yet for the volume rendering.

- The author of the "remote" backend is currently refactoring the code, and my test application is being used as the guinea pig.

- HOWEVER: As the ANARI SDK is not designed to load data remotely, this path is infeasible: the entire dataset would have to be shipped over the network each time the server is run (which could take hours).

- WebXR: Began investigating the use of WebXR as a delivery means

- HOWEVER: No browsers for Linux currently support WebXR

- WiVRn: Currently investigating the use of WiVRn as a tool to network OpenXR applications to phone-based headsets/HMDs, such as the Quest

- Software has been installed on Quest-1

- Have a WiVRn server running on Linux Mint 21.3

- This did require an entire OS upgrade

- Looking at how to build on larger-memory machines

- Presently streams to local network

- Need to test with ssh-tunneling

- For security-concerned systems

- Needs a new technique to establish network communications

- The WiVRn authors seem open to adding a new technique


- Electric-Maple:

- An in-progress open-source alternative to WiVRn

- Also based on the Monado code-base (like WiVRn)

- Thus far only HMD interface implemented

- Advantage (once it works) is that it could run on security-concerned systems

- CloudXR:

- Am on the CloudXR testers list (communications with Greg Jones of NVIDIA)

- Also met one of the project leads at SIGGRAPH (Arjun Dube)

- ISSUE: CloudXR is presently just for OpenVR

- OpenXR is in planning stages, I will investigate when it becomes available.

- FYI: Jakob Bornecrantz (formerly of Collabora, now NVIDIA) is working on this

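To put a rough number on the "could take hours" remark about shipping the whole dataset to the ANARI "remote" backend, the following sketch estimates transfer time. The 1 TB size and the link speeds are assumptions chosen for illustration (the page only says "near-terabyte"), and protocol overhead is ignored unless an efficiency factor is supplied.

```python
def transfer_hours(dataset_bytes, link_bits_per_second, efficiency=1.0):
    """Hours needed to move dataset_bytes over a link at the given rate."""
    seconds = dataset_bytes * 8 / (link_bits_per_second * efficiency)
    return seconds / 3600


ONE_TB = 10**12  # assumed dataset size in bytes ("near-terabyte")

# Assumed link speeds; substitute measured throughput where available.
for label, rate in [("100 Mbit/s", 100e6), ("1 Gbit/s", 1e9), ("10 Gbit/s", 10e9)]:
    print(f"{label}: {transfer_hours(ONE_TB, rate):.1f} h")
# 100 Mbit/s: 22.2 h
# 1 Gbit/s: 2.2 h
# 10 Gbit/s: 0.2 h
```

Under these assumptions, even a dedicated 1 Gbit/s link needs more than two hours per server start, which is why a path that reloads the data on every run is impractical.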

Next on the list

- PARTIAL: Update the ANARI plugin for ILLIXR

- DONE: Finish the WiVRn build and test with OpenXR

- DONE: Develop an OpenXR-ANARI application

- Test the Barney ANARI backend for overlapping volume rendering

- POSTPONED: Target Date: August 7: Develop a WebXR interface suitable for viewing on stand-alone & PC-VR systems

- PARTIAL: Will be investigated starting in September

- Shifted to use of WiVRn for streaming

- BEGUN: Also todo: Explore the prospects of an ANARI-Web rendering interface

- Testing with Trame

- PARTIAL: Update the ANARI interface to ILLIXR, and add the Mayo Clinic volume rendering as an example application

- ANARI port completed, still need to add a volume rendering scene

- Work on new ANARI camera that can render a 360 image over the "remote" interface

- Explore multi-rendering for CAVE and multi-client usage

- Begin developing a user interface that allows the user to select "blobs" as nuclei, etc.

- PARTIAL: Work on an OpenXR interface

- BEGUN: Begin paper Introduction and Previous Work sections

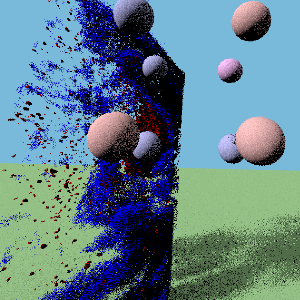

Some pictures

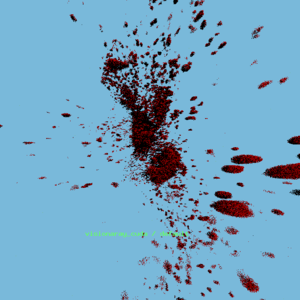

- Toirt Samhlaigh rendering of new data (red-channel only)

- ANARI-VR rendering of new data (red-channel only)

- ANARI-VR rendering of new data (three-channel)

Data

Much of the experimental work described here is based on a volumetric dataset created by a 3D microscope, which produces real-color images stacked into the volume. That dataset is too large to provide for quick downloads, so an alternative source for example datasets is provided here (though most are the standard single-scalar type).

Software

Software that has been tested with this dataset (though often with some method of size reduction employed) includes:

- ParaView

- ANARI

- VisRTX ANARI backend

- Visionaray ANARI backend

- VTKm & VTKm-graph

- ANARI Volume Viewer

- Barney multi-threaded renderer

- hayStack (Barney viewer)

- Barney's "BANARI" ANARI backend

- hayStack's "HANARI" ANARI backend

- PBRT code from Physically Based Rendering book

ParaView usage

hayStack usage

The hayStack application uses the multi-GPU Barney rendering library to display volumes with interactive controls of the opacity map. It is intended to be a simple application that serves as a proof-of-concept for the Barney renderer. There are a handful of command line options and runtime inputs to know:

% ...

where

- 4@ — ??

- -ndg — ??

Runtime keyboard inputs:

- ! — dump a screenshot

- C — output the camera coordinates to the terminal shell

- E — (perhaps) jump camera to edge of data

- T — dump the current transfer function as "hayMaker.xf"

Example output (RGB tests):

(Original example -- single channel)

Process

Python Data Manipulation Scripts

Step 1: Tiff to Tiff converter & extractor

This program converts the data from the original Tiff compression scheme to an LZW compression scheme, and at the same time can extract a subvolume and/or reduce the data samples of the volume.

Presently all parameters are hard-coded in the script:

- input_path

- reduce — sub-sample amount of the selected sub-volume

- startAt — beginning of the range to extract (along the X? axis)

- extractTo — end of the range to extract (along the X? axis)
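The extraction and reduction controlled by these parameters can be sketched as follows. This is a minimal illustration, not the actual script: it works on an in-memory nested list instead of a TIFF file, omits the LZW recompression, assumes the range applies to the first index, and assumes extract_to is exclusive. The function names are hypothetical.

```python
def extract_and_reduce(volume, start_at, extract_to, reduce):
    """Return a sub-sampled sub-volume of a nested-list volume.

    volume is indexed as volume[i][j][k]. The extraction range is applied
    to the first index (an assumption; the script's axis is not confirmed),
    and every `reduce`-th sample is kept along all three axes.
    """
    if reduce < 1:
        raise ValueError("reduce must be >= 1")
    if not 0 <= start_at < extract_to <= len(volume):
        raise ValueError("need 0 <= start_at < extract_to <= len(volume)")
    return [
        [row[::reduce] for row in plane[::reduce]]
        for plane in volume[start_at:extract_to:reduce]
    ]


def shape(volume):
    """Sample counts along the three axes of a nested-list volume."""
    return (len(volume), len(volume[0]), len(volume[0][0]))


# Tiny synthetic volume: 8 x 4 x 6 samples, each value encodes its own index.
vol = [[[100 * i + 10 * j + k for k in range(6)] for j in range(4)] for i in range(8)]
sub = extract_and_reduce(vol, start_at=2, extract_to=8, reduce=2)
print(shape(sub))  # (3, 2, 3)
print(sub[0][0])   # [200, 202, 204]
```

With reduce=2 the sample count drops by roughly a factor of eight, which is the kind of size reduction that makes the data manageable for the renderers listed above.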

Step 2: process_skin-<val>.py

This program reads the LZW-compressed Tiff file from step 1, and first extracts the R, G & B channels from the data. Using the RGB values, additional color attributes are calculated that can be used as scalar values that represent particular components of the full RGB color. Finally, the data is written to a VTK ".vti" 3D-Image file.

There are (presently) two versions of this file. The first ("process_skin.py") was hard-coded to the specific parameters of the early conversion tests. The second ("process_skin-e300.py") is being transitioned into one that can handle more "generic" (to a degree) inputs.

In the future, I will also be outputting "raw" numeric data for use with tools that only deal in the bare-bones data.

The current (hard-coded) parameters are:

- extract — the size of the original data (used to determine R,G,B spacing)

- gamma — the exponential curvature filter to apply to the data
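As a rough illustration of the per-voxel work in step 2, the sketch below splits one RGB voxel into its channels, applies a gamma curve, and derives extra scalar attributes. The choice of hue, saturation, and value as the derived attributes, the 8-bit input range, and the function names are all assumptions for this example. The actual script's attribute set is not listed here, and writing the ".vti" file is omitted.

```python
import colorsys


def apply_gamma(value, gamma):
    """Apply a power-law curve to a value normalised to [0, 1]."""
    return value ** gamma


def voxel_attributes(r, g, b, gamma=1.0, max_value=255):
    """Split one RGB voxel into channels plus derived scalar attributes.

    The derived attributes (hue, saturation, value) are illustrative
    assumptions, as is the 8-bit max_value default.
    """
    rn, gn, bn = (apply_gamma(c / max_value, gamma) for c in (r, g, b))
    hue, saturation, value = colorsys.rgb_to_hsv(rn, gn, bn)
    return {
        "red": rn, "green": gn, "blue": bn,
        "hue": hue, "saturation": saturation, "value": value,
    }


attrs = voxel_attributes(255, 0, 0, gamma=2.0)
print(attrs["red"], attrs["hue"], attrs["saturation"])  # 1.0 0.0 1.0
```

Each key in the returned dictionary corresponds to one scalar field that could be stored alongside the others, so that a single-scalar volume renderer can display any one of them.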